Key Points:

• There has been significant commentary on whether AI will replace white-collar workers en masse;

• Current AI architectures lack the organisational memory and judgement of a human worker. Models would need to change considerably to achieve this outcome;

• Even if the models do improve to achieve this, we think a more likely outcome will be increased production and productivity. Firms compete with each other and those that replace headcount with AI and hold production constant will be outcompeted by those firms which merge AI and humans;

• There will be frictions associated with AI adoption. Some workers will likely be displaced. Much of the income derived from productivity gains could flow to a narrow subset of the population;

• Regardless, history suggests that society as a whole is always better off with technological innovation and it is hard to argue that this time will be different.

At the risk of turning this into an AI blog, in this month’s Market Insight we address emergent fears that AI will become so capable and so widespread that it will displace vast swathes of the workforce, leading to mass unemployment. There is no doubt in our minds that AI will be an increasing factor in the day-to-day workflows of many people. Whether it continues to improve to the point where it becomes an effective, complete replacement for humans at large scale is uncertain, as is the impact on the labour market and the economy if AI actually achieves replacement quality. Given this uncertainty, our aim in this Insight is to highlight some potential paths forward rather than to be prescriptive about outcomes. Think of this as a thought experiment rather than an outlook.

You Are More Than a Task Machine

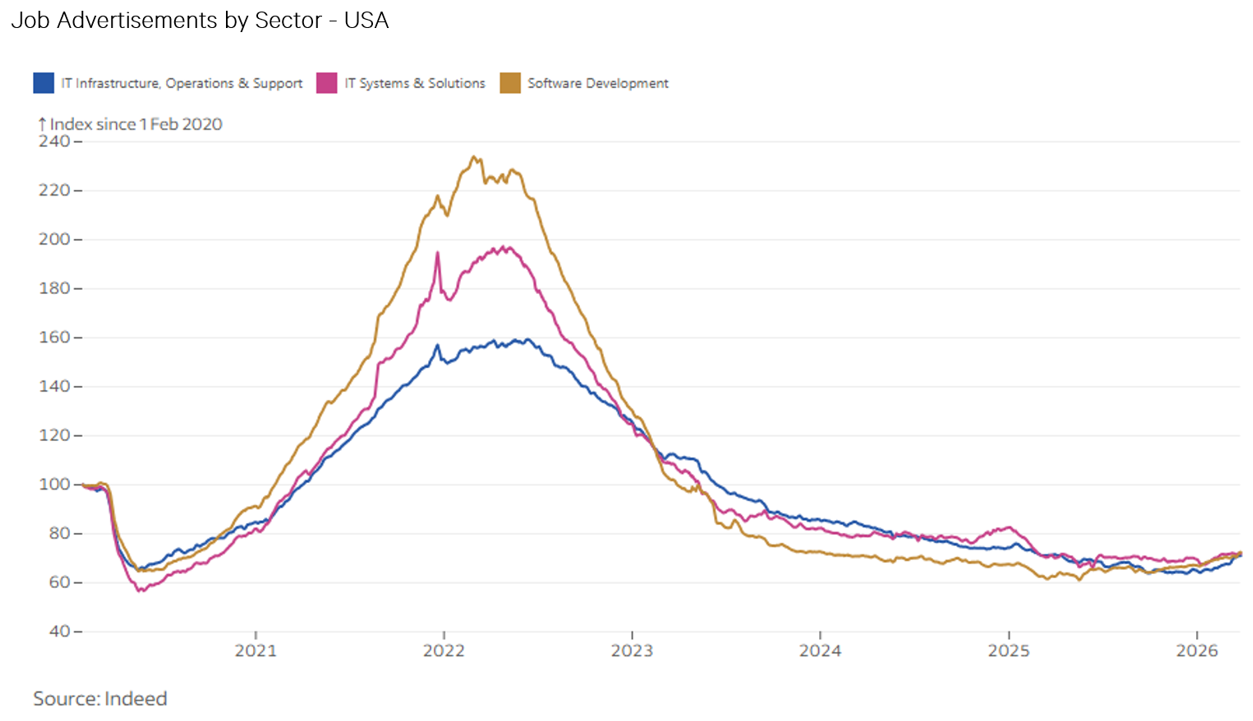

The one thing that is clear to us today is that frontier AI models in their current form are not even close to being a one-for-one replacement for a human. That may be a controversial statement given the rhetoric around AI, but we think there are very few businesses today in a position to remove an employee and switch on an AI subscription. The most obvious case in point is technology employment, particularly software. At the moment, the main thing AI is really good at is writing code. If AI were replacing workers, it follows that software developers would be the first to go. The data, however, doesn’t back this up. Job ads for software developers in the US have actually risen slightly since the middle of last year.

There are a few reasons for this: (1) tasks vs jobs, (2) review bottlenecks and (3) institutional memory. AI is very good at tasks. Give it something to do and depending on how good you are at prompting and building skills, you will generate a pretty good outcome. However, very few jobs are just tasks, let alone one task which can be repeated over and over. Professional work is not just a sequence of cognitive tasks and the tasks that exist are often pretty random. Work involves building trust with clients, reading a room, knowing who on the team to ask which question, sitting through meetings that achieve nothing explicit but surface information that matters later, and the kind of casual hallway conversation that turns into a decision two weeks later. These are not tasks AI can be directed to complete.

This brings us to the second issue, the review bottleneck. At the moment, high productivity AI users are sending tasks off to AI agents, oftentimes in parallel, reviewing the output, and thinking of what that means for the next task. The review process is a bottleneck. There are only so many tasks a person can design and review the output of. The computer is still limited to the speed of the human.

The solution for that would be removing the human from the equation and directing AI to do the complete suite of tasks which make up a job, including decision making. This bumps into the third problem, institutional memory and knowledge. Humans are very good at stringing together context across multiple time horizons and dimensions and figuring out how to apply that to a task. AI cannot currently accumulate institutional knowledge the way humans can, nor can it recall this knowledge outside the equivalent of database text searches. This is all before we even attempt to consider decision making, collaboration and judgement – all things most jobs involve which AI in its current form isn’t going to replace.

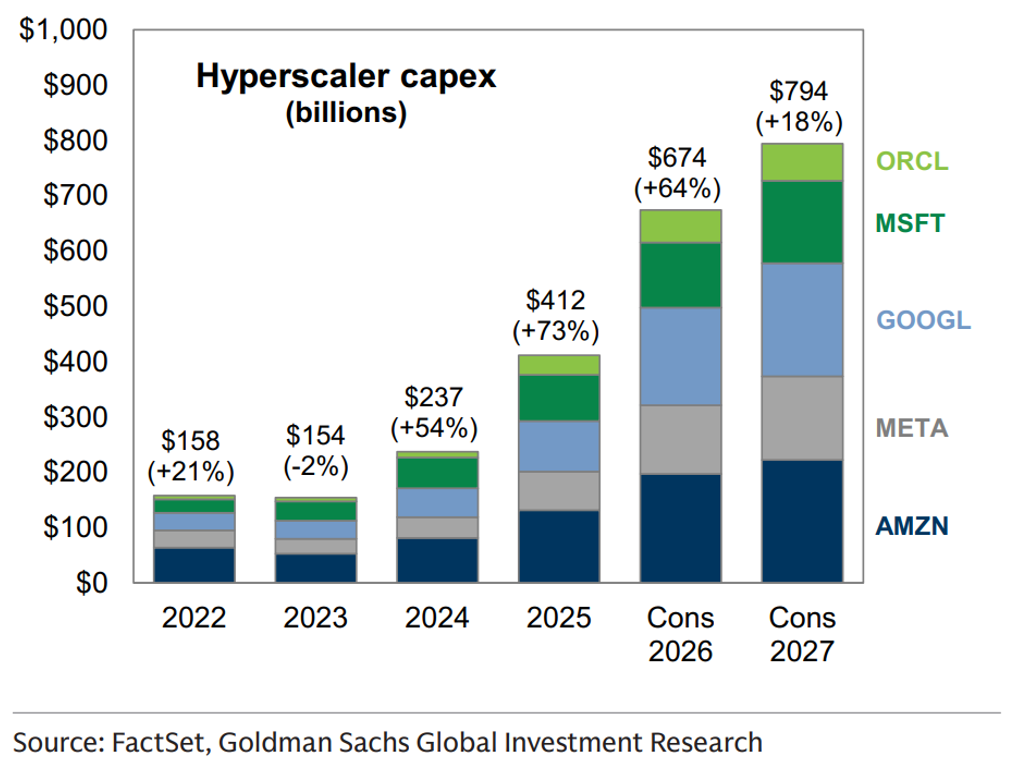

Human Memory Is Free

The above reflects models as they stand today and AI capability will continue to improve. $800 billion of annual data centre investment (see below) guarantees progress on the problems that improve with more compute and training. The question is whether the specific problems blocking human replacement are problems that can be solved with compute or training.

The gap between current AI and a human employee's institutional memory isn't one thing; it is several distinct technical problems. AI now has some retrieval functionality. It can look up facts from previous prompts and responses and incorporate them into current prompts. This is useful, but it is essentially a better database, not memory. The model doesn't know things it has done before; it looks them up.

Turning experience into understanding is a separate problem. A human analyst who sees a particular client situation three times develops an intuition about how it tends to play out, what to watch for, and what usually goes wrong. That integration of experience into something like judgement is what distinguishes a senior professional from a junior one. Current models don't do this. Updating beliefs based on experience is also unsolved. The cognitive function that lets a human say "I used to think X, but after seeing Y happen three times, I don't anymore" doesn't really exist in current models. You can prompt a model to reason about what it used to think versus what it thinks now, but the next conversation starts fresh.

Judgement about what the problem actually is sits above this. A human professional spends a substantial part of their day deciding which problem to solve, which framework applies, what the real question is behind the stated question, and when the standard playbook doesn't fit the situation in front of them. AI is very good at solving problems that are well-defined. It is much weaker at recognising when a situation looks like the standard one but isn't.

Finally, there is the question of accountability. When a human makes a decision, there is a person who owns it, can be questioned about it, and bears the consequences if it goes wrong. Organisations run on this. It is not clear what the equivalent looks like for an AI making the same decision, or whether the people interacting with that AI will accept its outputs with the same trust they extend to a known colleague with a track record.

Each of these is a different AI research problem and each is at a different stage of maturity. Storage and retrieval are largely solved. Experience-into-understanding is active research with some promising directions. Belief updating, judgement about problem framing, and accountability are unsolved.

Maybe these problems get solved with ongoing research and investment, but the solution won’t be free. Compute costs money, and new models incorporating more training data, context, memory and inference power will require more of it. Current AI models have a mathematical quirk: the cost of the core step that relates each piece of context to every other piece grows quadratically rather than linearly as the context grows. Asking the model to consider 10x more background information doesn't cost 10x more; it can cost closer to 100x more in underlying compute. On top of this, the latest generation of "reasoning" AI models (which think through problems step by step before answering) already consume 10 to 100 times more compute per query than the models that came before them. If AI is already costly and needs to improve significantly to replace humans, the cost of a replacement-quality AI may end up approaching that of the human worker it is meant to replace.
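The scaling arithmetic above can be sketched in a few lines. This is a simplified illustration, not a cost model: it assumes compute is dominated by the step in which every piece of context is compared with every other piece, and the function names are our own. Real systems also carry costs that grow linearly and use various optimisations, so the quadratic figure is best read as a rough upper guide.

```python
def linear_cost_multiple(context_growth: float) -> float:
    """Cost multiple if compute grew in step with the amount of context."""
    return context_growth


def quadratic_cost_multiple(context_growth: float) -> float:
    """Cost multiple if every piece of context is compared with every other piece
    (assumption: this pairwise step dominates total compute)."""
    return context_growth ** 2


if __name__ == "__main__":
    # Compare the two growth paths for a few context expansions.
    for growth in (2, 10, 100):
        print(
            f"{growth}x context: linear {linear_cost_multiple(growth)}x, "
            f"quadratic {quadratic_cost_multiple(growth)}x"
        )
```

Under these assumptions, the gap between the two paths widens quickly: the larger the context, the more the quadratic term dominates the bill.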

It Is a Dog-Eat-Dog World

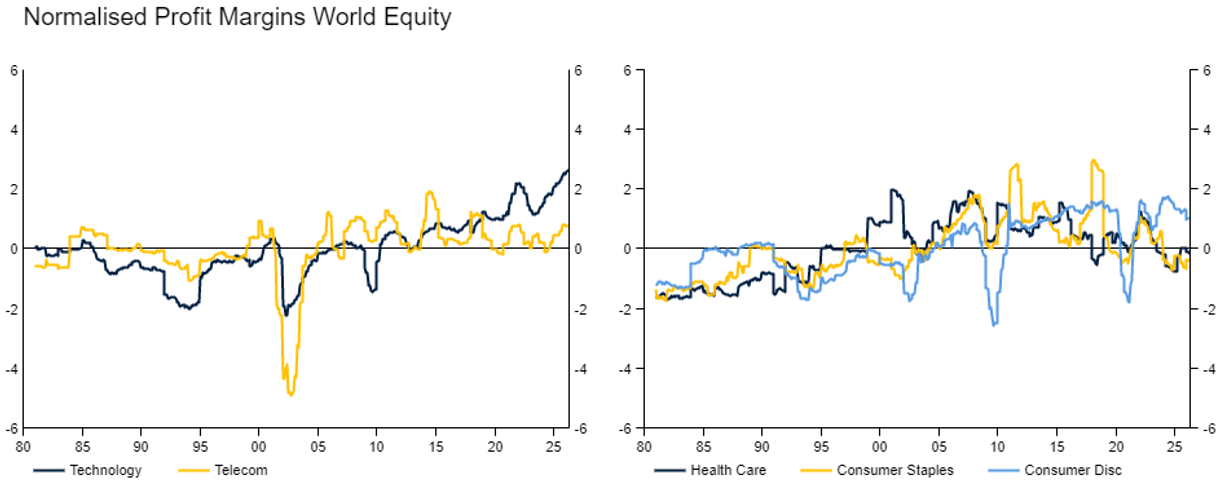

Leaving unanswered the question of whether AI can actually replace humans, we now turn to another question: would it? Most businesses exist in some form of competitive landscape. This landscape encourages the development of both cheaper and more innovative ways to provide goods and services to customers. Following an innovation, the successful business normally enjoys a period of excess profitability within its industry. It can use this to expand market share via more investment and production, or return profits to owners. However, the good times never last forever. Competitors steal ideas, invest in capex, poach good staff or come up with their own innovations to match. The excess profits are eventually eroded and the segment normalises. There are exceptions. Innovation can be protected for long periods via patents, moats can be too large for new entrants to cross, and dominant companies can buy out new entrants.

Below we can see some examples of this at the sector level. Profit margins across sectors generally sit around a long run average with variations above and below which are unwound. At the individual business level, it is likely the argument holds even more strongly.

In the context of AI, the question becomes: if a business can produce the same amount of output with 75%, or 50%, or 25% of its workforce, will it choose to do so, or will it expand production, provide new and better goods and services to its customers, or attempt to grow market share? We think that in a competitive environment where all firms have access to the same AI tools, businesses will find themselves pushed to expand. Those that try to shed labour and sit on big margins will be outcompeted. There will be some exceptions, but we think that even in the hypothetical future where AI is something close to a full replacement for a human, the dominant result will be more production rather than dramatically less employment.
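To put rough numbers on the choice described above, the sketch below shows how much output a firm could produce if it kept its full headcount instead of cutting it. It rests on a strong simplifying assumption that is ours, not a forecast: output scales in proportion to headcount once AI is adopted. The function name is illustrative.

```python
def output_multiple_if_headcount_kept(workforce_share_needed: float) -> float:
    """Output multiple available to a firm that keeps all of its staff.

    `workforce_share_needed` is the share of the current workforce needed to
    produce today's output with AI (for example 0.5 for 50%).
    Assumption: output scales proportionally with headcount.
    """
    if not 0 < workforce_share_needed <= 1:
        raise ValueError("share must be in the range (0, 1]")
    return 1.0 / workforce_share_needed


if __name__ == "__main__":
    # The three scenarios named in the text.
    for share in (0.75, 0.50, 0.25):
        multiple = output_multiple_if_headcount_kept(share)
        print(f"{share:.0%} of workforce needed: up to {multiple:.2f}x output at full headcount")
```

On these assumptions, a firm that cuts staff and holds output constant is competing against rivals able to produce roughly 1.3x, 2x or 4x as much with the same cost base, which is the pressure the argument relies on.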

Look to Windward

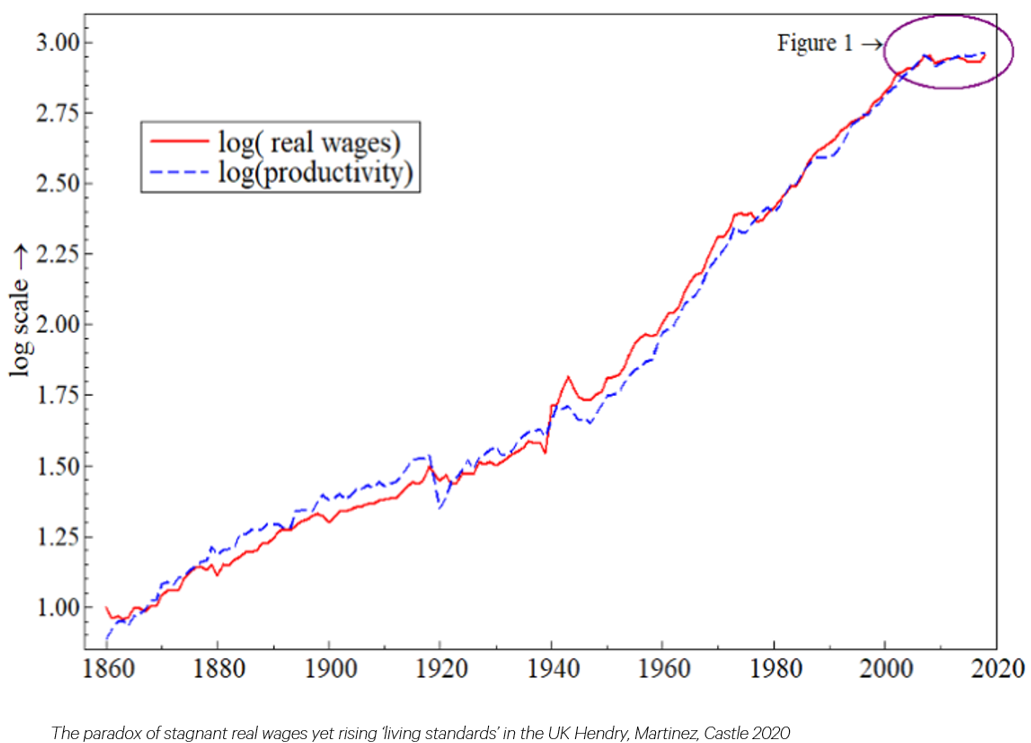

Historically, the gains from technological improvements have flowed to workers. Growth in real wages over the very long term has broadly matched productivity growth (see below). Our baseline view is that humans will be net beneficiaries from AI. Businesses will merge workers and AI to improve their business and capture market share. However, this journey isn’t without potential friction or risks.

An obvious friction is the potential for displaced workers. Even assuming AI isn’t a complete replacement for humans, and businesses merge the AI they have with humans, some people will inevitably be left behind. The automobile put horse-and-buggy drivers out of work. The personal computer displaced the typing pool and armies of secretaries. These innovations also eventually created more jobs than they displaced. Many have argued that new entrants to the labour force will suffer the worst, because their jobs are more task-oriented and potentially easier to replicate with AI. This is a valid concern, but many arguing this case seem to forget that these new entrants will also be able to use AI to make themselves more productive. They will also have grown up with AI and will have a higher baseline level of familiarity with the available tools than more established workers do today.

What does the world look like if that is indeed where we are heading? John Maynard Keynes (a legendary economist) wrote an essay almost 100 years ago entitled Economic Possibilities for Our Grandchildren. He predicted that by 2030, technological advancements and productivity gains would lead to a 15-hour work week, allowing humanity to focus on leisure and living wisely. He was clearly wrong on that front, although you could argue that many knowledge workers probably achieve 15 hours of productive work every week. He also predicted that living standards would rise four to eight times over the following century, which has been broadly correct. Technological innovation has been around as long as humans. It has unambiguously been good for living standards and it has never led to mass unemployment, despite much of that innovation augmenting or replacing things humans used to do. Every time there is a new innovation there is concern about jobs disappearing, and every time new jobs have appeared to replace those that were lost. Maybe this time is different, but it’s a bold claim to argue against hundreds of years of human history, especially when those making the claim most loudly are those selling AI.

Portfolio Positioning

Our portfolios are currently long growth exposure, but within equities we maintain a strong bias towards diversification across region and style. We expect AI-associated companies to continue to do well, but they are quite expensive. Regular listed companies that are yet to fully adopt AI will benefit in the period ahead and are much more fairly valued. Owning both sides of the table seems sensible to us given the uncertainty around who benefits the most.

Prepared by Drummond Capital Partners (Drummond) ABN 15 622 660 182, AFSL 534213. It is exclusively for use by Drummond clients and should not be relied on by any other person. Any advice or information contained in this report is limited to General Advice for Wholesale clients only.

The information, opinions, estimates and forecasts contained are current at the time of this document and are subject to change without prior notification. This information is not considered a recommendation to purchase, sell or hold any financial product. The information in this document does not take account of your objectives, financial situation or needs. Before acting on this information recipients should consider whether it is appropriate to their situation. We recommend obtaining personal financial, legal and taxation advice before making any financial investment decision. To the extent permitted by law, Drummond does not accept responsibility for errors or misstatements of any nature, irrespective of how these may arise, nor will it be liable for any loss or damage suffered as a result of any reliance on the information included in this document. Past performance is not a reliable indicator of future performance.

This report is based on information obtained from sources believed to be reliable, we do not make any representation or warranty that it is accurate, complete or up to date. Any opinions contained herein are reasonably held at the time of completion and are subject to change without notice.